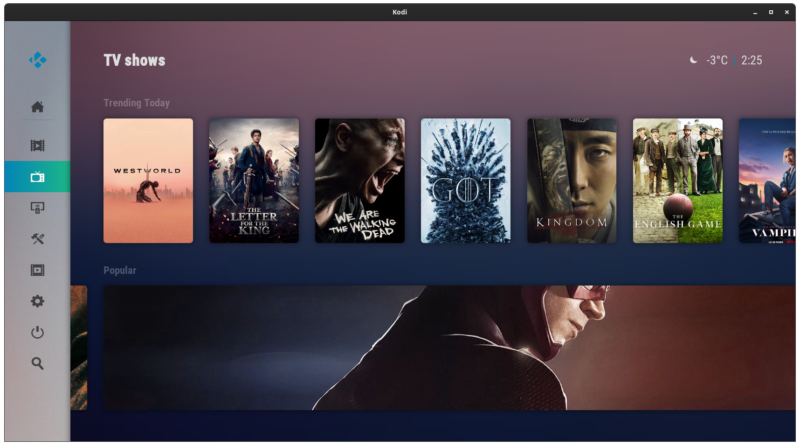

I was trying to run Kodi and some other GUI apps from LXD containers on Arch Linux and ran into a few small problems, so I decided to document the setup in case I need it again in the future. The same approach should work for Docker and other types of Linux containers.

UID mapping

When it comes to running containers, it is recommended to use user namespace remapping (man subuid) so that each container gets its own UID range. This way container processes are better isolated from the host and from each other.

In cases where you use containers to simply run different software versions and don’t care about the added security of namespace remapping, you can allow reusing the same UIDs inside the container. This allows easy mounting of host directories inside the container without the need of any additional work to deal with file permissions.

So if you want to reuse the same user ID, append additional mapping lines to subuid/subgid files:

echo "root:$UID:1" | sudo tee -a /etc/subuid /etc/subgid

Create container

lxc launch images:archlinux/current/amd64 gui

If you decided to use a specific UID mapping, configure the container to use it:

lxc config set gui raw.idmap "both $UID 1000"

For Kodi to use hardware accelerated graphics, we want to share GPU with the container:

lxc config device add gui mygpu gpu

lxc config device set gui mygpu uid 1000

lxc config device set gui mygpu gid 1000

In my case the host is running the graphics server, so we have to share the Xorg socket with the container for it to be able to draw on it:

lxc config device add gui X0 proxy connect=unix:/tmp/.X11-unix/X0 listen=unix:/tmp/.X11-unix/X0 bind=container uid=1000 gid=1000 mode=0666

Permanently add the DISPLAY variable to the container's configuration, then restart the container for it to take effect:

lxc config set gui raw.lxc 'lxc.environment = DISPLAY=:0'

The container must have the same version of the graphics drivers as the host. When both host and container run Arch Linux, or when open source drivers are used, this is taken care of automatically. If you want to use an Ubuntu container and you have an Nvidia card with proprietary drivers, you have to install them in the container:

sudo apt-get install ubuntu-drivers-common software-properties-common

sudo add-apt-repository ppa:graphics-drivers/ppa

sudo apt-get update

sudo ubuntu-drivers devices

sudo apt-get install nvidia-driver-450

Allow access to Xorg on host

Not being able to access host Xorg was the reason why it didn’t work for me from the start. You have to allow connecting to X on host even when using the unix socket:

xhost +local:gui

gui is the hostname of the container

This has to be run each time X is restarted (unless configured permanently).
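One way to configure it permanently is to run the command from a file that your X session reads at login. The following is a minimal sketch, assuming your display manager or startx setup sources ~/.xprofile (many do, but not all). The function name allow_container_x is made up for illustration.

```shell
# Hypothetical helper, meant to be defined in ~/.xprofile
# (assumption: your X session sources that file at login).
allow_container_x() {
    container="${1:-gui}"   # container hostname, "gui" in this post
    # Do nothing when there is no X session or xhost is not installed.
    [ -n "${DISPLAY:-}" ] || return 0
    command -v xhost >/dev/null 2>&1 || return 0
    xhost "+local:${container}"
}
```

Calling allow_container_x gui right after the definition would then repeat the grant on every login.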

Using xhost + disables permission checking altogether and can be useful while testing. Run man xhost to see other configuration options.
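The graphics-related steps up to this point can be collected into one small script that runs on the host. This is only a sketch built from the commands in this post. The names setup_gui_container, run and DRY_RUN are my own, and the dry-run mode prints commands without their original quoting.

```shell
# Sketch: GPU, X11 socket, DISPLAY and xhost steps as one function.
run() {
    # With DRY_RUN=1, print the command instead of executing it
    # (the printed form loses the original quoting).
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

setup_gui_container() {
    name="$1"          # container name, e.g. gui
    cuid="${2:-1000}"  # UID/GID of the user inside the container
    run lxc config device add "$name" mygpu gpu
    run lxc config device set "$name" mygpu uid "$cuid"
    run lxc config device set "$name" mygpu gid "$cuid"
    run lxc config device add "$name" X0 proxy \
        connect=unix:/tmp/.X11-unix/X0 listen=unix:/tmp/.X11-unix/X0 \
        bind=container uid="$cuid" gid="$cuid" mode=0666
    run lxc config set "$name" raw.lxc 'lxc.environment = DISPLAY=:0'
    run lxc restart "$name"
    run xhost "+local:$name"
}
```

Running it first with DRY_RUN=1 (for example DRY_RUN=1 followed by setup_gui_container gui 1000) lets you review the seven commands before anything is changed.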

Add audio support

Share the host's PulseAudio socket with the container so that it can play audio (the /run/user/1000 path below assumes your host user has UID 1000):

lxc config device add gui PASocket proxy connect=unix:/run/user/1000/pulse/native listen=unix:/tmp/.pulse-native bind=container uid=1000 gid=1000 mode=0666

Install pulseaudio

sudo pacman -S pulseaudio

Inside the container make pulseaudio use the already mounted socket

sed -i "s/; enable-shm = yes/enable-shm = no/g" /etc/pulse/client.conf

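echo export PULSE_SERVER=unix:/tmp/.pulse-native | tee --append /home/ubuntu/.profile

Now pactl info in the container should show that it is connected. The /home/ubuntu path assumes a container user named ubuntu; adjust it to the home directory of your own container user.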
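If pactl info does not connect, it helps to check the two things the client depends on: the proxied socket and the PULSE_SERVER variable. A minimal sketch to run inside the container follows. The function name check_pulse_client is hypothetical, and the default path is the listen path used for the proxy device in this post.

```shell
# Sketch: sanity-check the PulseAudio client setup inside the container.
check_pulse_client() {
    sock="${1:-/tmp/.pulse-native}"   # listen path of the proxy device
    # The proxy device should have created a unix socket at this path.
    if [ ! -S "$sock" ]; then
        echo "missing socket: $sock"
        return 1
    fi
    # The client only uses the socket when PULSE_SERVER points at it.
    if [ "${PULSE_SERVER:-}" != "unix:$sock" ]; then
        echo "PULSE_SERVER is not unix:$sock"
        return 1
    fi
    echo "ok"
}
```

A "missing socket" result points at the proxy device on the host side, while a PULSE_SERVER mismatch usually means the profile file was not read by the current shell.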

Test it

Connect to the container and make sure the /tmp/.X11-unix/X0 socket is available there. Then try running some program.

If you get this:

No protocol specified

Error: couldn't open display :0

… then you have to adjust the permissions with xhost on the host.
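The check above can be written down as a tiny helper that maps the error text to the likely fix. This is a sketch only: diagnose_x_error is a made-up name, and the second branch (a display error without "No protocol specified") reflects my assumption that the socket or DISPLAY is the problem in that case.

```shell
# Sketch: map the error text of a failed GUI program to the likely fix.
diagnose_x_error() {
    case "$1" in
        *"No protocol specified"*)
            # X refused the client: permissions, as described in this post.
            echo "run xhost on the host" ;;
        *"couldn't open display"*|*"cannot open display"*)
            # Assumption: without the line above, the connection itself failed.
            echo "check DISPLAY and the /tmp/.X11-unix/X0 socket" ;;
        *)
            echo "unknown" ;;
    esac
}
```

Pass it the captured error output of the program you tried to start, for example the two lines shown above.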

Notes

This is probably going to be impossible with Wayland out of the box but it is not like Wayland is really production ready, even in 2020. 🙂

References

How to easily run graphics-accelerated GUI apps in LXD containers on your Ubuntu desktop